What is missing today

Long-horizon household sequences, multi-camera manipulation traces, tactile-rich grasp failures, intervention data, and evaluation datasets tied to real deployment constraints.

A high-signal gathering on the next bottleneck in robotics: what data is still missing, what teams need to collect next, and how to connect hardware, teleoperation, annotation, evaluation, and deployment into one real operating loop.

We want one room where labs, startups, operators, and system builders can compare notes on what is still missing from embodied AI datasets: failure data, tactile signals, edge cases, recovery traces, human corrections, fleet feedback, and domain-specific workflows.

Which data modalities matter most now, how far simulation alone can go, where annotation standards still break, and which new benchmarks the ecosystem actually needs.

A clearer map of the robotics data stack, practical collection and annotation patterns, and concrete ways to turn hardware access into training-ready datasets.

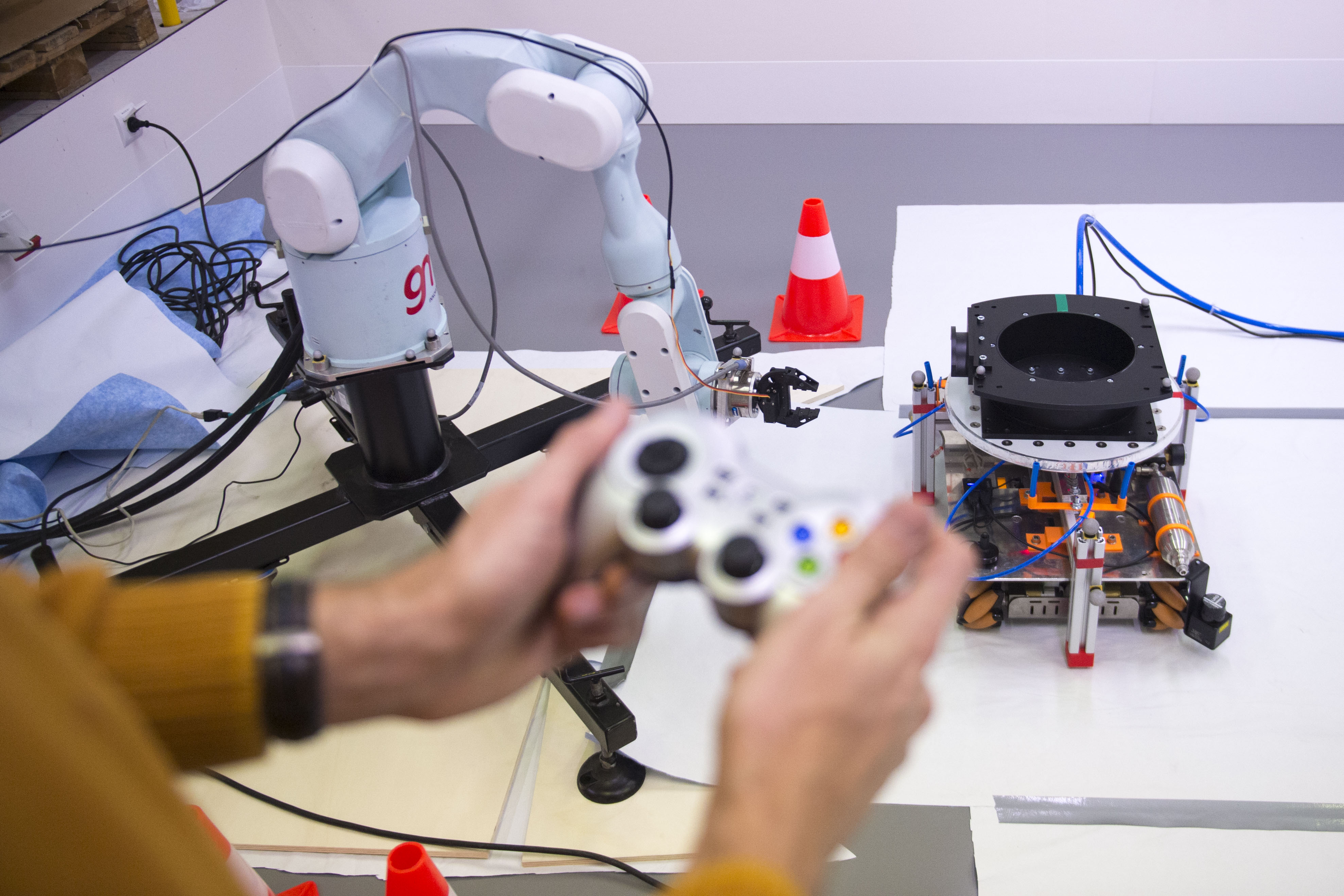

Not just slides. Real robots, data collection rigs, and operator workflows on the floor.

Visible, affordable, and practical for collecting manipulation demonstrations, operator interventions, and rapid task iteration.

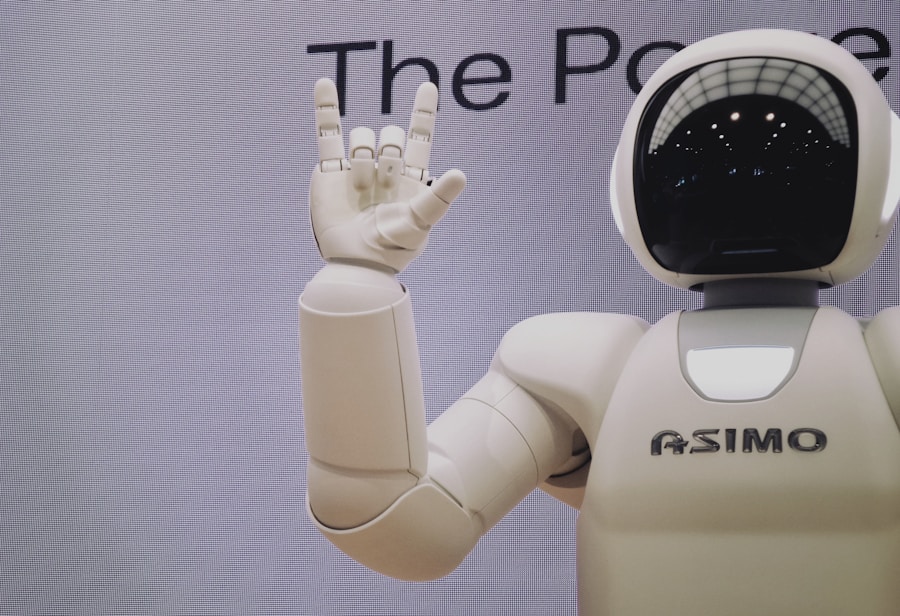

What high-value humanoid data looks like beyond isolated clips: intent, balance, contact, recovery, and supervision cost.

How teams move from demos to repeatable data pipelines with teleop, policy evaluation, annotation QA, and real-world feedback.

Failure traces, uncertainty labels, retries, tactile interactions, and sequences where humans intervene or correct a policy.

Long videos, dense manipulation states, multimodal alignment, policy intent, and event labels that matter operationally rather than visually.

Shared schemas, richer metadata, cross-robot transfer assumptions, teleop provenance, environment tags, and benchmark-ready structure.

Offline QA tied to deployment risk, benchmark coverage for edge cases, and loops that connect rollout evidence back into collection priorities.

We do not want a summit that stops at “data is important.” We want to show the full stack teams can use: hardware access, teleoperation, multimodal capture, structured annotation, QA, and a platform that keeps the loop moving.

OpenArm, humanoids, hands, and mobile systems that generate meaningful interaction data instead of toy-only traces.

Teleop, camera views, state streams, intervention logs, and session metadata that tell you how a task actually unfolds.

Task rubrics, reviewer roles, reject reasons, versioned annotations, and a cleaner path from raw media to learning-ready assets.

Use platform telemetry, episode history, and failure review to decide what to collect next instead of relying on intuition alone.

Demo floor opens with robot stations, data collection examples, and platform walkthroughs.

Researchers and operators compare the gap between current datasets and real deployment needs.

Short, concrete talks on tactile data, teleop supervision, evaluation gaps, and annotation bottlenecks.

An integrated session on capture, schema, QA, annotation, and storage patterns teams can adopt immediately.

Benchmarks, shared formats, missing modalities, and what could make the next year of robotics data genuinely better.

Meet researchers, hardware teams, data operators, and companies building embodied AI stacks.